Hive Remote Connections

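To configure remote connections to Hive, first make sure HiveServer2 is running and listening on its configured port (`hive.server2.thrift.port`, 10000 by default):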

```shell
hive/bin/hiveserver2
```

Check whether HiveServer2 is running:

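```shell
# lsof -i:10000
COMMAND PID USER FD   TYPE DEVICE SIZE/OFF NODE NAME
java    660 root 565u IPv6 89917  0t0      TCP  *:ndmp (LISTEN)
```

`ndmp` is the `/etc/services` name for port 10000. The output therefore shows a Java process (HiveServer2) listening on port 10000.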
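If `lsof` is not available, for example on the client machine running IDEA, you can run a similar reachability check from Java. This is a minimal sketch that assumes the host name `node01` (used in the configuration in section 4) and port 10000. `PortProbe` is a hypothetical helper. A successful connect only shows that something is listening on the port. It does not prove that the listener is HiveServer2.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

class PortProbe {

    /**
     * Returns true if a TCP connection to host:port can be opened within timeoutMillis.
     * This only proves that some listener exists, not that it is HiveServer2.
     */
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host could not be resolved
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumed defaults: node01:10000; override with command-line arguments
        String host = args.length > 0 ? args[0] : "node01";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 10000;
        System.out.println(host + ":" + port + " listening: " + isListening(host, port, 2000));
    }
}
```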

1. Connecting to Hive remotely with the default method

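If Hive runs in an environment integrated with Hadoop, HiveServer2 can hook into Hadoop's user authentication mechanism. It then uses the credentials of the already-authenticated Hadoop user for authentication and authorization.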

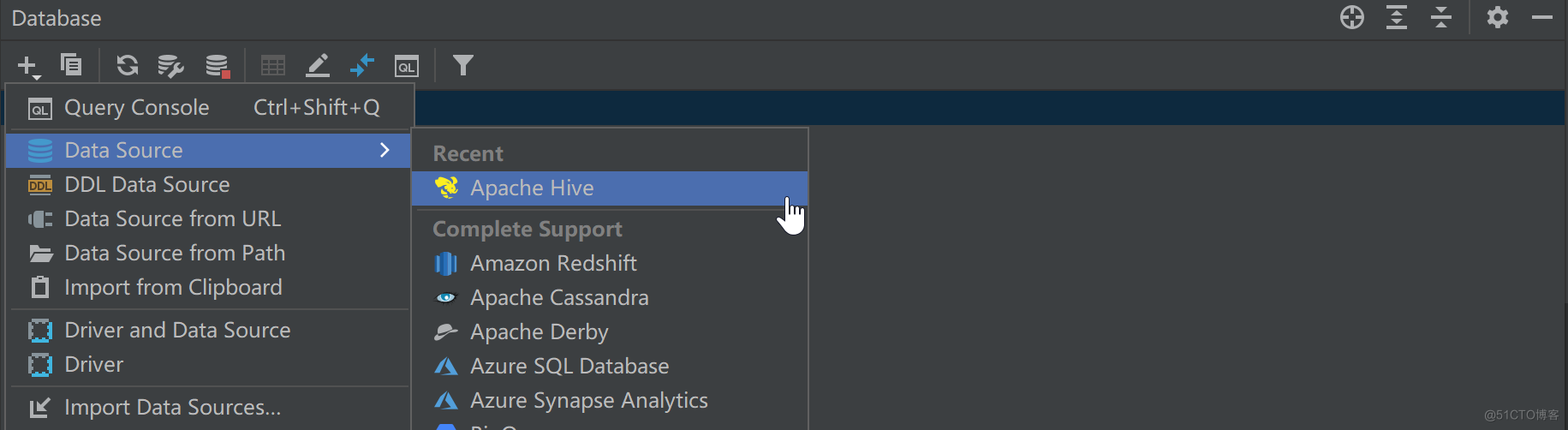

In IDEA, open the Database tool window and add a Hive connection as shown:

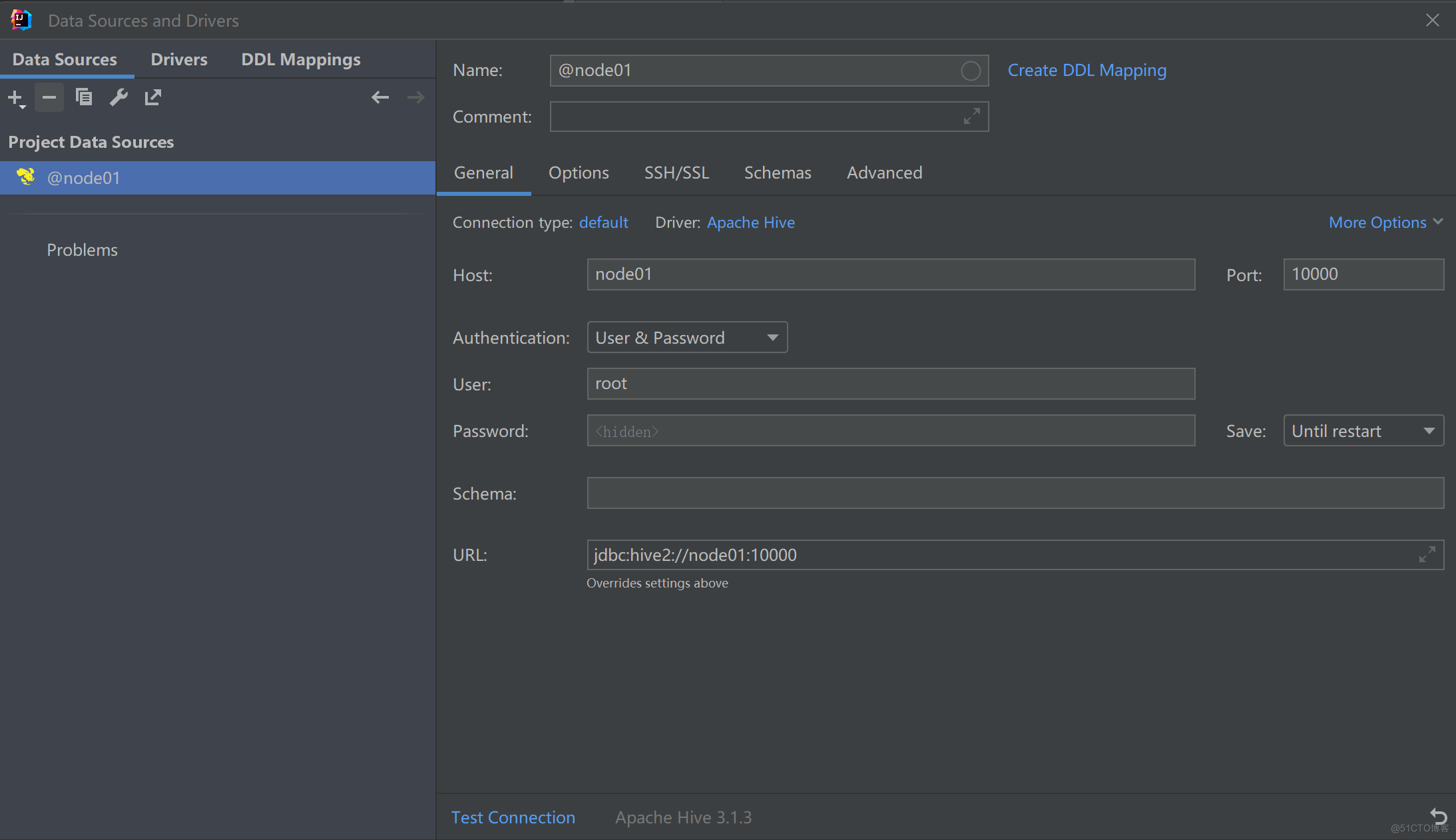

Enter the Hive address and the username that Hadoop uses:


Note: on first use, IDEA reports that the JDBC driver is missing. Download it as prompted.

Click Test Connection. The connection to Hive fails, and the hiveserver2 log shows:

```text
WARN [HiveServer2-Handler-Pool: Thread-47] thrift.ThriftCLIService (ThriftCLIService.java:OpenSession(340)) - Error opening session:
org.apache.hive.service.cli.HiveSQLException: Failed to open new session: java.lang.RuntimeException: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.authorize.AuthorizationException): User: root is not allowed to impersonate root
    at org.apache.hive.service.cli.session.SessionManager.createSession(SessionManager.java:434)
    at org.apache.hive.service.cli.session.SessionManager.openSession(SessionManager.java:373)
    at org.apache.hive.service.cli.CLIService.openSessionWithImpersonation(CLIService.java:195)
    at org.apache.hive.service.cli.thrift.ThriftCLIService.getSessionHandle(ThriftCLIService.java:472)
    at org.apache.hive.service.cli.thrift.ThriftCLIService.OpenSession(ThriftCLIService.java:322)
    at org.apache.hive.service.rpc.thrift.TCLIService$Processor$OpenSession.getResult(TCLIService.java:1497)
    at org.apache.hive.service.rpc.thrift.TCLIService$Processor$OpenSession.getResult(TCLIService.java:1482)
    at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39)
    at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)
    at org.apache.hive.service.auth.TSetIpAddressProcessor.process(TSetIpAddressProcessor.java:56)
    at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:750)
```

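Cause: HiveServer2 runs as `root` and opens each session on behalf of (impersonates) the connecting user, which here is also `root`. Hadoop has no proxy-user rule that allows `root` to do this.

Solution: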

Add the following configuration to `Hadoop/etc/hadoop/core-site.xml`, then distribute the file to every node.

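Note: `root` in the property names is the user that HiveServer2 and the Hadoop components run as. Change it to match your setup. The value `*` allows impersonation from any host and of users in any group, so consider narrowing it in production.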

```xml
<property>
    <name>hadoop.proxyuser.root.hosts</name>
    <value>*</value>
</property>
<property>
    <name>hadoop.proxyuser.root.groups</name>
    <value>*</value>
</property>
```
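The Java sketch below is a deliberately simplified, hypothetical model of the proxy-user check. It shows why the error appears and why these two properties fix it. It is not Hadoop's implementation. It only reads the `.hosts` and `.groups` keys, treats `*` as "match anything" and any other value as a comma-separated list, and ignores `hadoop.proxyuser.<user>.users` as well as IP-range and CIDR matching. The class and method names are illustrative.

```java
import java.util.Collections;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Hypothetical, simplified model of the hadoop.proxyuser.<superuser>.hosts/.groups rules. */
class ProxyUserModel {

    static boolean canImpersonate(Map<String, String> conf, String superUser,
                                  String clientHost, Set<String> proxiedUserGroups) {
        String hosts = conf.get("hadoop.proxyuser." + superUser + ".hosts");
        String groups = conf.get("hadoop.proxyuser." + superUser + ".groups");
        if (hosts == null || groups == null) {
            // No rule for this superuser: the "is not allowed to impersonate" case
            return false;
        }
        return matches(groups, proxiedUserGroups)
                && matches(hosts, Collections.singleton(clientHost));
    }

    // "*" matches everything; otherwise the value is a comma-separated allow list
    private static boolean matches(String value, Set<String> candidates) {
        if (value.trim().equals("*")) {
            return true;
        }
        Set<String> allowed = new HashSet<>();
        for (String item : value.split(",")) {
            allowed.add(item.trim());
        }
        for (String candidate : candidates) {
            if (allowed.contains(candidate)) {
                return true;
            }
        }
        return false;
    }
}
```

With no `hadoop.proxyuser.root.*` keys, `canImpersonate` returns false. That matches the "User: root is not allowed to impersonate root" failure above. With both keys set to `*`, it returns true.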

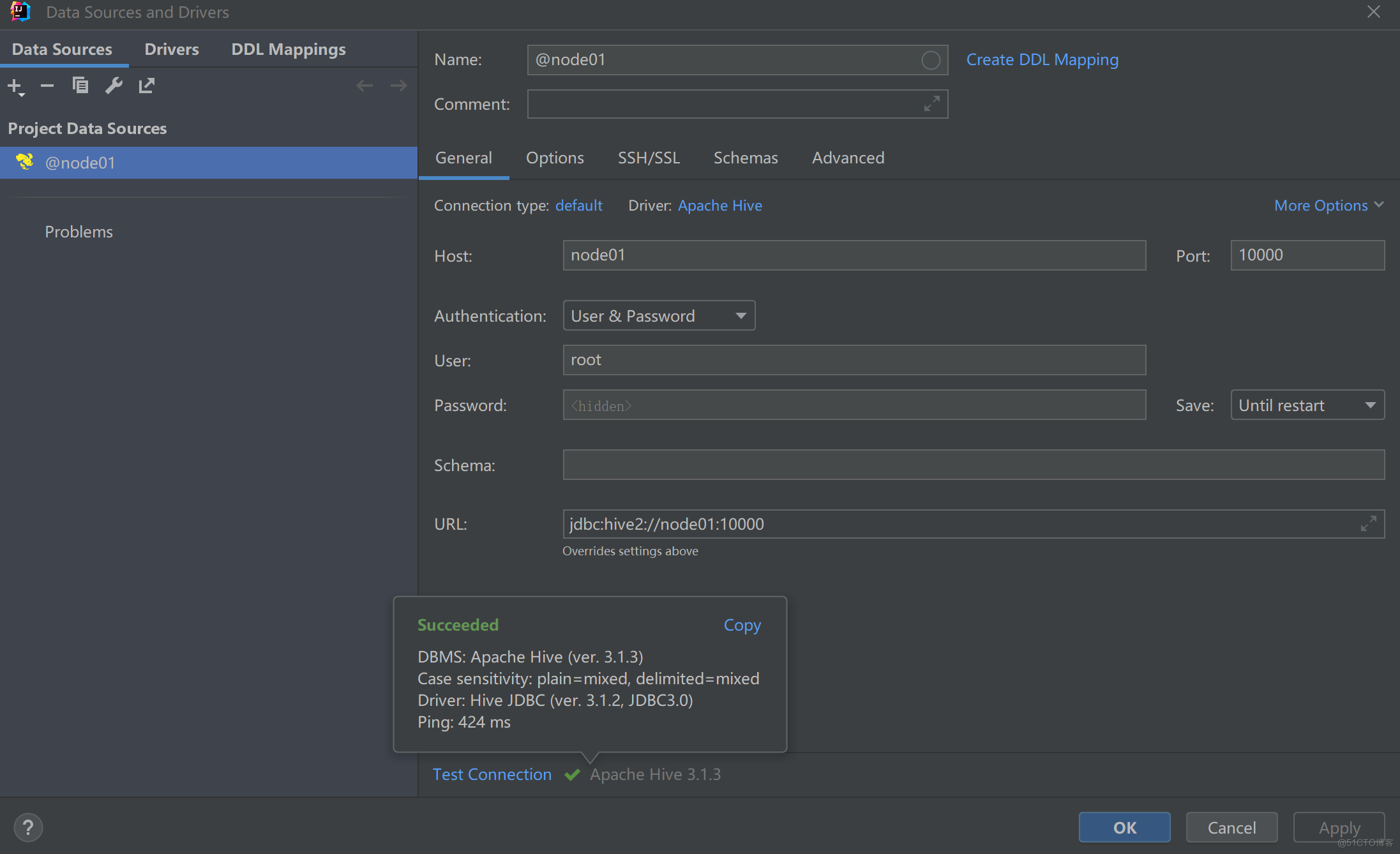

Restart Hadoop and hiveserver2, then test the connection again:

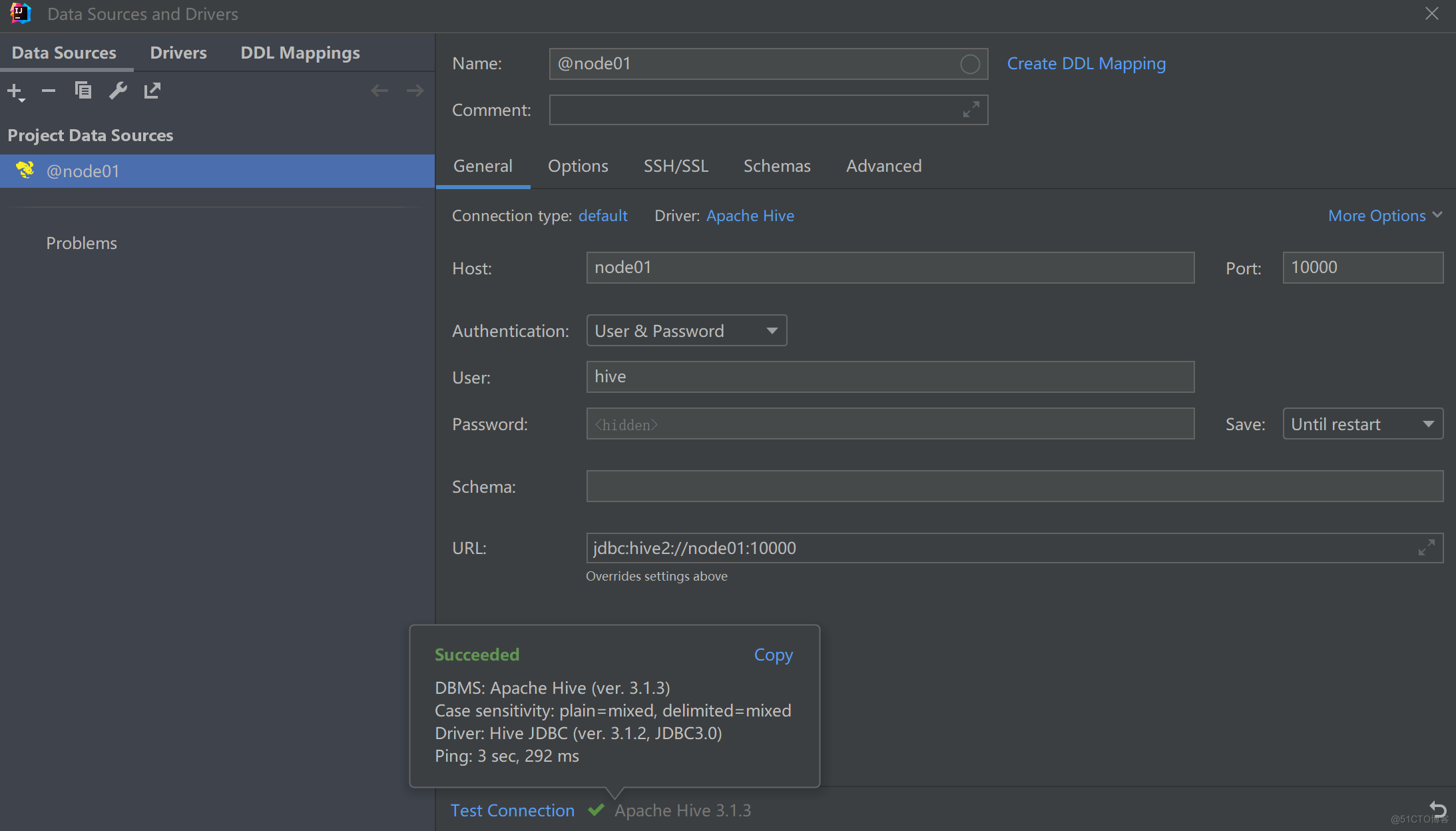
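You can also open the same connection with plain JDBC instead of IDEA. This is a minimal sketch under several assumptions: HiveServer2 runs at `node01:10000` (the host used in the configuration in section 4), authentication is the default `NONE`, the user is `root`, and a `hive-jdbc` jar matching the server version is on the classpath. `hiveUrl` is a hypothetical helper.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

class HiveJdbcDemo {

    /** Builds a HiveServer2 JDBC URL of the form jdbc:hive2://host:port/database. */
    static String hiveUrl(String host, int port, String database) {
        return "jdbc:hive2://" + host + ":" + port + "/" + database;
    }

    public static void main(String[] args) throws ClassNotFoundException, SQLException {
        // Explicitly load the Hive JDBC driver (requires hive-jdbc on the classpath)
        Class.forName("org.apache.hive.jdbc.HiveDriver");
        String url = hiveUrl("node01", 10000, "default");
        // With NONE authentication the password is not checked, so it is left empty
        try (Connection conn = DriverManager.getConnection(url, "root", "");
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery("show databases")) {
            while (rs.next()) {
                System.out.println(rs.getString(1));
            }
        }
    }
}
```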

2. Connecting to Hive remotely with a custom authentication class

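By default, Hive does not enable user authentication (`hive.server2.authentication` is `NONE`), so the username and password a client supplies are not verified. For better security, you can require a username and password to log in to Hive. To do this, write a custom authentication class.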

Create a Java project and make sure it includes the required dependencies, such as the Hive JDBC driver:

```xml
<dependency>
    <groupId>org.apache.hive</groupId>
    <artifactId>hive-jdbc</artifactId>
    <version>3.1.3</version>
</dependency>
```

Note: use the same Hive JDBC version as the Hive installation on the server.

Create a class that implements the `PasswdAuthenticationProvider` interface:

```java
package cn.ybzy.demo;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.hive.conf.HiveConf;
import org.apache.hive.service.auth.PasswdAuthenticationProvider;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import javax.security.sasl.AuthenticationException;

public class MyHiveCustomPasswdAuthenticator implements PasswdAuthenticationProvider {

    private static final Logger LOG = LoggerFactory.getLogger(MyHiveCustomPasswdAuthenticator.class);

    // Each user's password is read from the property hive.jdbc_passwd.auth.<username>
    private static final String HIVE_JDBC_PASSWD_AUTH_PREFIX = "hive.jdbc_passwd.auth.%s";

    private Configuration conf = null;

    @Override
    public void Authenticate(String userName, String passwd) throws AuthenticationException {
        LOG.info("Hive user " + userName + " is trying to log in");

        String passwdConf = getConf().get(String.format(HIVE_JDBC_PASSWD_AUTH_PREFIX, userName));
        if (passwdConf == null) {
            String message = "No password configured for user: " + userName;
            LOG.info(message);
            throw new AuthenticationException(message);
        }

        // Call equals on the configured value so a null passwd cannot cause a NullPointerException
        if (!passwdConf.equals(passwd)) {
            String message = "Username and password do not match, user: " + userName;
            LOG.info(message);
            throw new AuthenticationException(message);
        }
    }

    // Lazily creates a configuration backed by HiveConf (which loads hive-site.xml)
    public Configuration getConf() {
        if (conf == null) {
            this.conf = new Configuration(new HiveConf());
        }
        return conf;
    }

    public void setConf(Configuration conf) {
        this.conf = conf;
    }
}
```

Package the project as a jar and put it in Hive's `lib` directory:

```shell
mv hive/MyHiveCustomPasswdAuthenticator.jar hive/lib/
```

Then configure `hive-site.xml`, and restart hiveserver2 for the change to take effect:

```xml
<!-- Use a custom username and password for remote connections -->
<property>
    <name>hive.server2.authentication</name>
    <value>CUSTOM</value><!-- default is NONE; change it to CUSTOM -->
</property>
<!-- Class that performs the authentication -->
<property>
    <name>hive.server2.custom.authentication.class</name>
    <value>cn.ybzy.demo.MyHiveCustomPasswdAuthenticator</value>
</property>
<!-- Username and password: the last segment of name (here "hive") is the username, value is the password -->
<property>
    <name>hive.jdbc_passwd.auth.hive</name>
    <value>hive123</value>
</property>
```
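You can see how these properties are resolved without a running Hive. The hypothetical sketch below mirrors the lookup in `MyHiveCustomPasswdAuthenticator`, with a plain `Map` standing in for `HiveConf`. Unlike the class above, it compares passwords with `MessageDigest.isEqual`, which does not stop at the first differing byte. That swap is a suggestion, not part of the original class.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.util.Map;

/** Hypothetical, Hive-free sketch of the username -> password property lookup. */
class PasswdLookupSketch {

    static final String PREFIX = "hive.jdbc_passwd.auth.%s";

    static boolean check(Map<String, String> conf, String user, String passwd) {
        // e.g. user "hive" -> key "hive.jdbc_passwd.auth.hive"
        String expected = conf.get(String.format(PREFIX, user));
        if (expected == null || passwd == null) {
            return false; // unknown user, or no password supplied
        }
        // Byte-wise comparison that does not short-circuit on the first mismatch
        return MessageDigest.isEqual(
                expected.getBytes(StandardCharsets.UTF_8),
                passwd.getBytes(StandardCharsets.UTF_8));
    }
}
```

With the configuration above, a client logs in as user `hive` with password `hive123`. For example, assuming HiveServer2 at `node01:10000`: `DriverManager.getConnection("jdbc:hive2://node01:10000/default", "hive", "hive123")`.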

3. Permission issues

When you connect to Hive remotely from IDEA and run operations, you may see the following exception:

```text
ERROR --- [ HiveServer2-Background-Pool: Thread-440] org.apache.hadoop.hive.metastore.utils.MetaStoreUtils (line: 166) : Got exception: org.apache.hadoop.security.AccessControlException Permission denied: user=hive, access=WRITE, inode="/hive/warehouse":root:supergroup:drwxr-xr-x
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:399)
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:255)
    at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:193)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1855)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1839)
    at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkAncestorAccess(FSDirectory.java:1798)
    at org.apache.hadoop.hdfs.server.namenode.FSDirMkdirOp.mkdirs(FSDirMkdirOp.java:59)
    at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:3175)
    at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.mkdirs(NameNodeRpcServer.java:1145)
    at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.mkdirs(ClientNamenodeProtocolServerSideTranslatorPB.java:714)
    at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
    at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:527)
    at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1036)
    at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1000)
    at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:928)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1729)
    at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2916)
```

Cause:

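Hive operations read and write HDFS, and the user logged in to Hive lacks the required permission. In the log, user `hive` needs WRITE access to `/hive/warehouse`, but that directory is owned by `root:supergroup` with mode `drwxr-xr-x`.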

Solution:

Make sure the Hive user (for example, `hive`) can operate on HDFS.

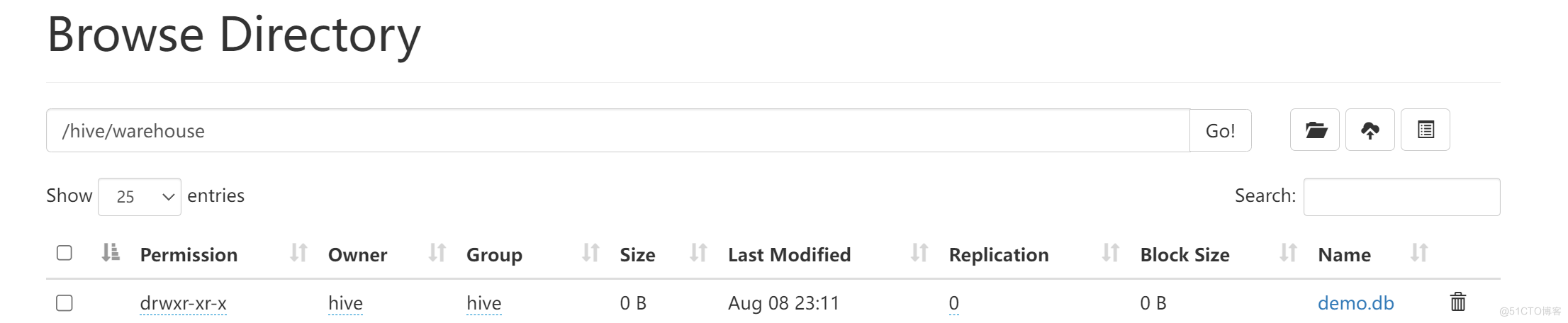

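Hive's warehouse location is configured as `/hive/warehouse` (`hive.metastore.warehouse.dir`), so grant the corresponding permissions on that directory. Changing the owner requires the HDFS superuser, which here is `root`: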

```shell
hadoop fs -chown hive:hive /hive/warehouse
```
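You can read the log line `user=hive, access=WRITE, inode="/hive/warehouse":root:supergroup:drwxr-xr-x` with a simplified model of the owner/group/other check that HDFS performs. This is a hypothetical sketch that assumes `hive` is not a member of `supergroup`. It ignores ACLs, the sticky bit, and the HDFS superuser (the NameNode user and members of `dfs.permissions.superusergroup`), who bypass the check entirely.

```java
import java.util.Set;

/** Hypothetical, simplified model of the HDFS owner/group/other permission check. */
class HdfsPermissionModel {

    enum Access { READ, WRITE, EXECUTE }

    /** mode is the 9-character rwx string without the leading 'd', e.g. "rwxr-xr-x". */
    static boolean permits(String user, Set<String> userGroups,
                           String owner, String group, String mode, Access access) {
        int offset;
        if (user.equals(owner)) {
            offset = 0;            // owner bits
        } else if (userGroups.contains(group)) {
            offset = 3;            // group bits
        } else {
            offset = 6;            // other bits
        }
        // READ -> 'r', WRITE -> 'w', EXECUTE -> 'x' at the matching position
        char required = "rwx".charAt(access.ordinal());
        return mode.charAt(offset + access.ordinal()) == required;
    }
}
```

Before the `chown`, `hive` falls into the "other" class (`r-x`), so WRITE is denied. After `chown hive:hive`, `hive` is the owner (`rwx`), so WRITE is allowed.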

Create a database in IDEA:

```sql
create database demo;
```

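Then check HDFS, for example with `hadoop fs -ls /hive/warehouse`. The new database appears as a `demo.db` directory.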

4. Additional notes

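Besides the methods above, Hive also supports more advanced authentication through Kerberos or LDAP. These are more involved and are not covered here.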

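For older Hive versions (2.x and earlier), the following configuration is sometimes given for setting the remote-connection username and password. However, `PASSWORD` is not one of the values that HiveServer2 accepts for `hive.server2.authentication` (NOSASL, NONE, LDAP, KERBEROS, PAM, CUSTOM). Verify it against your Hive version before relying on it. The last three properties are ordinary settings: they show the current database in the CLI prompt, set the Thrift port, and set the bind host.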

```xml
<property>
    <name>hive.server2.authentication</name>
    <value>PASSWORD</value>
</property>
<property>
    <name></name>
    <value>hive</value>
</property>
<property>
    <name>hive.server2.authentication.user.password</name>
    <value>hive123</value>
</property>
<property>
    <name>hive.cli.print.current.db</name>
    <value>true</value>
</property>
<property>
    <name>hive.server2.thrift.port</name>
    <value>10000</value>
</property>
<property>
    <name>hive.server2.thrift.bind.host</name>
    <value>node01</value>
</property>
```