Test environment

Windows: IDEA

Linux: Kafka, ZooKeeper

POM and demo

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.yk</groupId>

<artifactId>flink</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<flink.version>1.6.1</flink.version>

<slf4j.version>1.7.7</slf4j.version>

<log4j.version>1.2.17</log4j.version>

</properties>

<dependencies>

<!--******************* flink *******************-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.11</artifactId>

<version>${flink.version}</version>

<scope>compile</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-filesystem_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-core</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>3.1.1</version>

</dependency>

<!--alibaba fastjson-->

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>1.2.51</version>

</dependency>

<!--******************* logging *******************-->

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>${slf4j.version}</version>

<scope>runtime</scope>

</dependency>

<dependency>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

<version>${log4j.version}</version>

<scope>runtime</scope>

</dependency>

<!--******************* kafka *******************-->

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>1.1.1</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.3</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<!-- build a jar with dependencies -->

<plugin>

<artifactId>maven-assembly-plugin</artifactId>

<configuration>

<archive>

<manifest>

<mainClass>flink.kafkaFlink.KafkaDemo</mainClass>

</manifest>

</archive>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

</plugin>

</plugins>

</build>

</project>

package flink.kafkaFlink;

import java.util.Properties;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer010;

public class KafkaDemo {

public static void main(String[] args) throws Exception {

// set up the streaming execution environment

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Checkpointing is disabled by default. To enable it, call enableCheckpointing(n) on the StreamExecutionEnvironment,

// where n is the checkpoint interval in milliseconds. Here a checkpoint is triggered every 500 ms.

env.enableCheckpointing(500);

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

Properties properties = new Properties();

properties.setProperty("bootstrap.servers", "hadoop01:9092"); // IP or hostname of the Kafka broker(s); separate multiple entries with commas

properties.setProperty("zookeeper.connect", "hadoop01:2181"); // IP or hostname of the ZooKeeper node(s), comma-separated; only required by the Kafka 0.8 consumer

properties.setProperty("group.id", "test-consumer-group"); // group.id of the Flink consumer

System.out.println("11111111111");

// "test" is the Kafka topic to read from

FlinkKafkaConsumer010<String> myConsumer = new FlinkKafkaConsumer010<String>("test", new org.apache.flink.api.common.serialization.SimpleStringSchema(), properties);

myConsumer.assignTimestampsAndWatermarks(new CustomWatermarkEmitter());

DataStream<String> keyedStream = env.addSource(myConsumer); // records sent by the Kafka producer; this example does no further processing

System.out.println("2222222222222");

keyedStream.print(); // print the records received from the producer to the console

// execute program

System.out.println("3333333333333");

env.execute("Flink Streaming Java API Skeleton");

}

}

package flink.kafkaFlink;

import org.apache.flink.streaming.api.functions.AssignerWithPunctuatedWatermarks;

import org.apache.flink.streaming.api.watermark.Watermark;

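public class CustomWatermarkEmitter implements AssignerWithPunctuatedWatermarks<String> {

private static final long serialVersionUID = 1L;

// Records are expected to look like "<timestamp>,<payload>"; the text before the first comma is the event timestamp.

@Override

public long extractTimestamp(String element, long previousElementTimestamp) {

if (null != element && element.contains(",")) {

String[] parts = element.split(",");

return Long.parseLong(parts[0]);

}

// Records without a comma get timestamp 0.

return 0;

}

// Emits a watermark equal to the record's own timestamp; returning null emits no watermark.

@Override

public Watermark checkAndGetNextWatermark(String lastElement, long extractedTimestamp) {

if (null != lastElement && lastElement.contains(",")) {

String[] parts = lastElement.split(",");

return new Watermark(Long.parseLong(parts[0]));

}

return null;

}

}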
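The parsing rule used by CustomWatermarkEmitter can be tried out without a Flink or Kafka setup. The following is a minimal sketch that copies only the string handling into a standalone class; the class name `TimestampParsingSketch` and the sample records are made up for illustration and are not part of the original project.

```java
public class TimestampParsingSketch {

    // Same rule as CustomWatermarkEmitter.extractTimestamp, without Flink types:
    // the text before the first comma is parsed as the timestamp, otherwise 0 is returned.
    public static long extractTimestamp(String element) {
        if (element != null && element.contains(",")) {
            String[] parts = element.split(",");
            return Long.parseLong(parts[0]);
        }
        return 0L;
    }

    public static void main(String[] args) {
        // Hypothetical sample records in the "<timestamp>,<payload>" shape.
        String[] samples = {"1541000000000,hello", "no-comma-here", "1541000005000,a,b"};
        for (String sample : samples) {
            System.out.println(sample + " -> " + extractTimestamp(sample));
        }
    }
}
```

Note that a record whose prefix is not a number (for example `abc,hello`) makes `Long.parseLong` throw a `NumberFormatException`, both in this sketch and in the emitter it mirrors, so input typed into the producer should start with a numeric timestamp.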

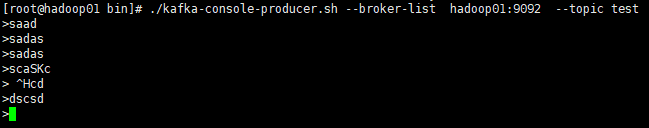

Start the services on the cloud host

1. Start ZooKeeper;

2. Start Kafka;

3. Create the topic;

4. Start the producer.

bin/zkServer.sh start

bin/kafka-server-start.sh config/server.properties

bin/kafka-topics.sh --create --zookeeper hadoop01:2181 --replication-factor 1 --partitions 1 --topic test

bin/kafka-console-producer.sh --broker-list hadoop01:9092 --topic test

Run the KafkaDemo program

1. Type some content into the Kafka producer console

2. Check the IDEA console
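Since CustomWatermarkEmitter treats the text before the first comma as the event timestamp, it may help to feed the producer lines of the form `<timestamp>,<payload>`. The sketch below generates a few such lines that can be pasted into the producer console; the class name `SampleRecords`, the `message-<i>` payload, and the one-second spacing are assumptions chosen for illustration.

```java
import java.util.ArrayList;
import java.util.List;

public class SampleRecords {

    // Builds `count` lines of the form "<millis>,message-<i>", spaced one second apart.
    public static List<String> build(long startMillis, int count) {
        List<String> lines = new ArrayList<>();
        for (int i = 0; i < count; i++) {
            lines.add((startMillis + i * 1000L) + ",message-" + i);
        }
        return lines;
    }

    public static void main(String[] args) {
        // Print five records starting at the current wall-clock time.
        for (String line : build(System.currentTimeMillis(), 5)) {
            System.out.println(line);
        }
    }
}
```

Each printed line can be entered in the producer console one at a time; lines without a comma are still printed by KafkaDemo, but they receive timestamp 0 and produce no watermark.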