import paddle

import paddle.nn.functional as F

from paddle.vision.transforms import ToTensor

from paddle import fluid

import paddle.nn as nn

print(paddle.__version__)

2.0.2

transform = ToTensor()

cifar10_train = paddle.vision.datasets.Cifar10(mode='train',

transform=transform)

cifar10_test = paddle.vision.datasets.Cifar10(mode='test',

transform=transform)

# 构建训练集数据加载器

train_loader = paddle.io.DataLoader(cifar10_train, batch_size=64, shuffle=True)

# 构建测试集数据加载器

test_loader = paddle.io.DataLoader(cifar10_test, batch_size=64, shuffle=True)

Cache file /home/aistudio/.cache/paddle/dataset/cifar/cifar-10-python.tar.gz not found, downloading https://dataset.bj.bcebos.com/cifar/cifar-10-python.tar.gz

Begin to download

Download finished

class MyNet(paddle.nn.Layer):

def __init__(self, num_classes=10):

super(MyNet, self).__init__()

self.conv1 = paddle.nn.Conv2D(in_channels=3, out_channels=32, kernel_size=(3, 3), stride=1, padding = 1)

# self.pool1 = paddle.nn.MaxPool2D(kernel_size=2, stride=2)

self.conv2 = paddle.nn.Conv2D(in_channels=32, out_channels=64, kernel_size=(3,3), stride=2, padding = 0)

# self.pool2 = paddle.nn.MaxPool2D(kernel_size=2, stride=2)

self.conv3 = paddle.nn.Conv2D(in_channels=64, out_channels=64, kernel_size=(3,3), stride=2, padding = 0)

# self.DropBlock = DropBlock(block_size=5, keep_prob=0.9, name='le')

self.conv4 = paddle.nn.Conv2D(in_channels=64, out_channels=64, kernel_size=(3,3), stride=2, padding = 1)

self.flatten = paddle.nn.Flatten()

self.linear1 = paddle.nn.Linear(in_features=1024, out_features=64)

self.linear2 = paddle.nn.Linear(in_features=64, out_features=num_classes)

def forward(self, x):

x = self.conv1(x)

x = F.relu(x)

# x = self.pool1(x)

# print(x.shape)

x = self.conv2(x)

x = F.relu(x)

# x = self.pool2(x)

# print(x.shape)

x = self.conv3(x)

x = F.relu(x)

# print(x.shape)

# x = self.DropBlock(x)

x = self.conv4(x)

x = F.relu(x)

# print(x.shape)

x = self.flatten(x)

x = self.linear1(x)

x = F.relu(x)

x = self.linear2(x)

return x

# 可视化模型

cnn2 = MyNet()

model2 = paddle.Model(cnn2)

model2.summary((64, 3, 32, 32))

---------------------------------------------------------------------------

Layer (type) Input Shape Output Shape Param #

===========================================================================

Conv2D-1 [[64, 3, 32, 32]] [64, 32, 32, 32] 896

Conv2D-2 [[64, 32, 32, 32]] [64, 64, 15, 15] 18,496

Conv2D-3 [[64, 64, 15, 15]] [64, 64, 7, 7] 36,928

Conv2D-4 [[64, 64, 7, 7]] [64, 64, 4, 4] 36,928

Flatten-1 [[64, 64, 4, 4]] [64, 1024] 0

Linear-1 [[64, 1024]] [64, 64] 65,600

Linear-2 [[64, 64]] [64, 10] 650

===========================================================================

Total params: 159,498

Trainable params: 159,498

Non-trainable params: 0

---------------------------------------------------------------------------

Input size (MB): 0.75

Forward/backward pass size (MB): 25.60

Params size (MB): 0.61

Estimated Total Size (MB): 26.96

---------------------------------------------------------------------------

{'total_params': 159498, 'trainable_params': 159498}

# 配置模型

from paddle.metric import Accuracy

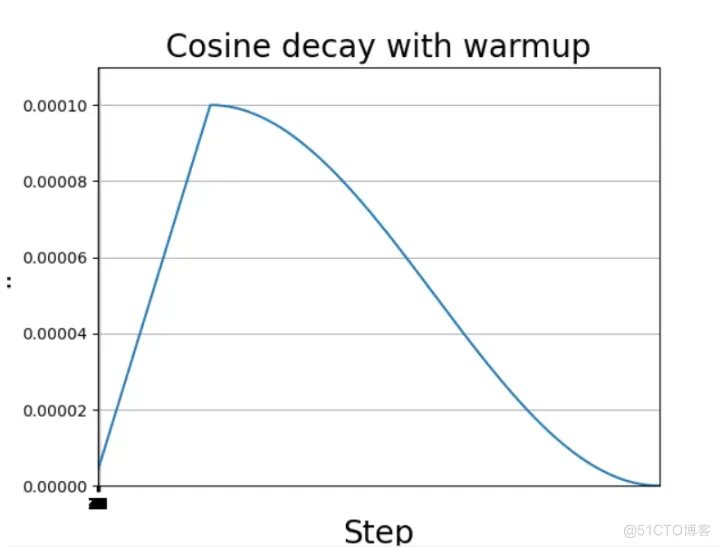

scheduler = CosineWarmup(

lr=0.5, step_each_epoch=100, epochs=8, warmup_steps=20, start_lr=0, end_lr=0.5, verbose=True)

optim = paddle.optimizer.SGD(learning_rate=scheduler, parameters=model2.parameters())

model2.prepare(

optim,

paddle.nn.CrossEntropyLoss(),

Accuracy()

)

# 模型训练与评估

model2.fit(train_loader,

test_loader,

epochs=10,

verbose=1,

)

The loss value printed in the log is the current step, and the metric is the average value of previous step.

Epoch 1/3

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/utils.py:77: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working

return (isinstance(seq, collections.Sequence) and

step 782/782 [==============================] - loss: 1.9828 - acc: 0.2280 - 106ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 157/157 [==============================] - loss: 1.5398 - acc: 0.3646 - 35ms/step

Eval samples: 10000

Epoch 2/3

step 782/782 [==============================] - loss: 1.7682 - acc: 0.3633 - 106ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 157/157 [==============================] - loss: 1.7934 - acc: 0.3867 - 34ms/step

Eval samples: 10000

Epoch 3/3

step 782/782 [==============================] - loss: 1.3394 - acc: 0.4226 - 105ms/step

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 157/157 [==============================] - loss: 1.4539 - acc: 0.3438 - 35ms/step

Eval samples: 10000